Restoring Media Assets by Harnessing the Power of Machine Learning


Recently, I restored a bunch of old macOS (OS X) desktop backgrounds from OS X 10.6 Snow Leopard (2009) on my personal blog, upscaling them from their original 1920 x 1200 resolution to 5K, likely a much higher resolution than the original photos ever existed in. It made a minor splash. The big question I kept getting asked was: how did I do it?

Previously, taking on a project like this would have required tens of hours per photo, restoring each one by hand and painting in lost details to achieve similar results. Today it takes a fraction of that: only an hour or so per photo, and that is with me being meticulous and still performing minor touch-ups. We’re now in the era of machine learning, which can do a lot of the dirty work for us.

Explaining machine learning to the non-programmers of the world (training data, neural networks, probabilistic reasoning and so forth) generally causes eyes to glaze over, but it has become so ubiquitous that even non-programmers encounter its shorthand, ML. Rather than discuss the ins and outs of machine learning, or how it’s shaped speech recognition software and computer vision, we can make it work for us without a deep understanding.

In recent years, my personal and professional life has shifted away from Adobe Creative Cloud to a more varied software set. The hardest application for me to replace was Photoshop, as I’ve been using it since version 3.0 as a kid. That’s the PowerPC Photoshop 3.0 from the early 90s, not CS3, from before Photoshop could be used as an adjective.

Today there are viable alternatives to Photoshop, and my personal favorite is Pixelmator Pro, a much more nimble image editor that packs in some game-changing features, many of which are based on machine learning, like ML Super Resolution. These wouldn’t be that big of a deal if they took an esoteric knowledge set to use. Instead, they only take a single click of a mouse.

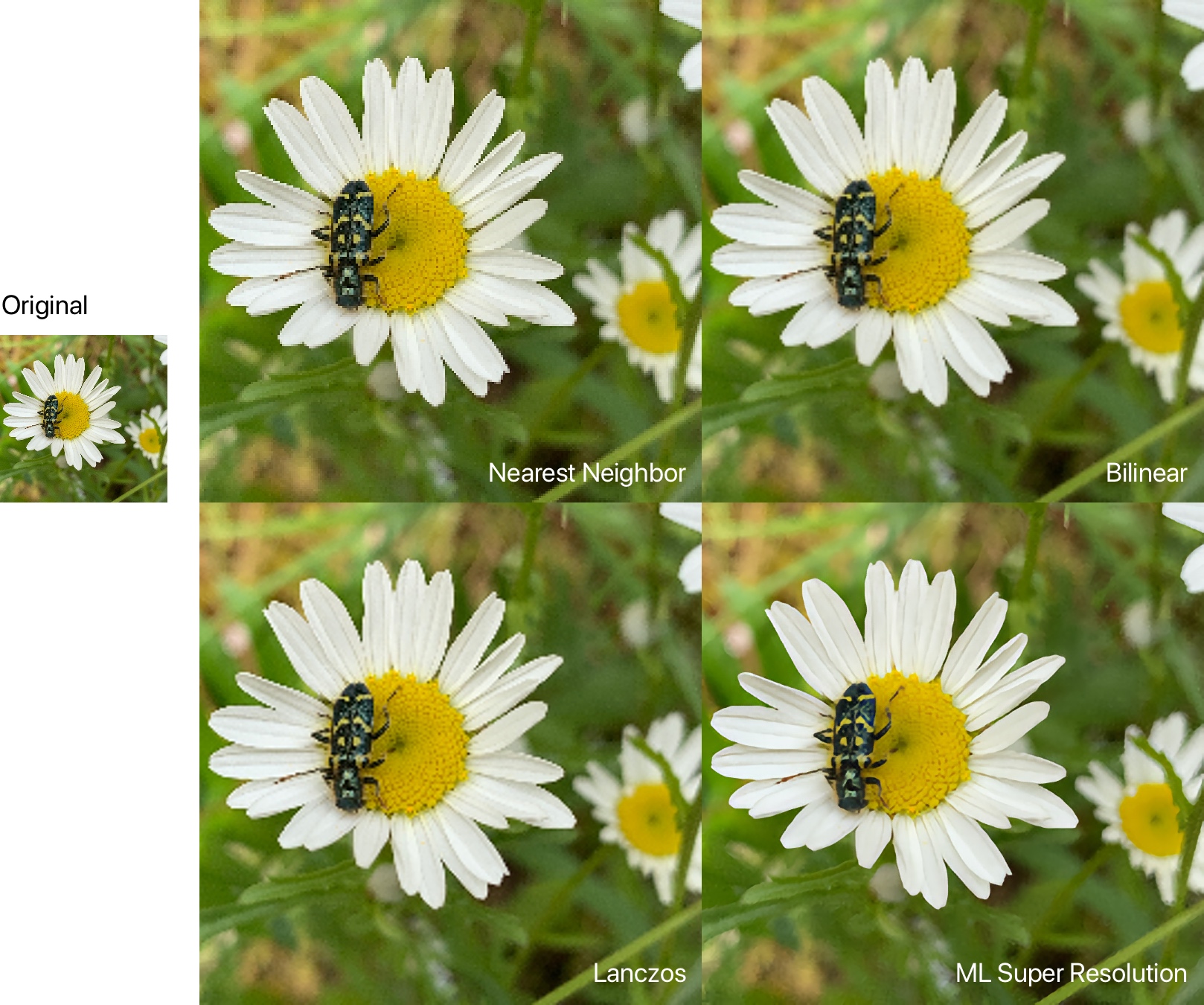

One of the most frequent tasks computers perform is upscaling raster images, aka zooming in or increasing the size of an image. This happens when you zoom in on an image in an application like Preview or Photoshop, zoom in on a page in a web browser, pinch to zoom on your iPhone, or play a lower-resolution video on a higher-resolution screen, like 1080p video on a 4K monitor.

Upscaling has come a long way in the past few years with machine-learning-assisted upscaling algorithms. These use tricks like taking into account the hue/luminosity (color and its brightness) of surrounding pixels and filling in what the algorithm believes the new pixels should be. Before machine learning, upscaling meant duplicating pixels (nearest neighbor) or duplicating pixels and creating transitions between their hue/luminosity using an algorithm (bilinear or Lanczos). If you have a sharp line, perhaps a sharp mountain silhouette against a sunset (or in my case, a flower and a bug), the machine learning algorithm will “notice” the sharp contrast between the two areas, as it has been “trained” to do so. It will then infer that it should try to keep the sharpness rather than lose the detail when it creates new pixels to fill the space from upscaling.
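To make the older, pre-ML methods concrete, here is a minimal Python sketch of nearest neighbor and bilinear upscaling on a tiny grayscale image stored as a list of rows. The function names and the 2 x 2 test image are my own illustration, not anything Pixelmator Pro or Photoshop exposes, and real implementations handle color channels and edge cases more carefully.

```python
def upscale_nearest(img, factor):
    """Nearest neighbor: every source pixel becomes a factor x factor block."""
    return [
        [img[y // factor][x // factor] for x in range(len(img[0]) * factor)]
        for y in range(len(img) * factor)
    ]


def upscale_bilinear(img, factor):
    """Bilinear: each new pixel is a distance-weighted mix of up to 4 source pixels."""
    src_h, src_w = len(img), len(img[0])
    out = []
    for y in range(src_h * factor):
        # Map the output pixel center back into source coordinates, clamped to the edges.
        sy = min(max((y + 0.5) / factor - 0.5, 0.0), src_h - 1)
        y0 = int(sy)
        y1 = min(y0 + 1, src_h - 1)
        fy = sy - y0
        row = []
        for x in range(src_w * factor):
            sx = min(max((x + 0.5) / factor - 0.5, 0.0), src_w - 1)
            x0 = int(sx)
            x1 = min(x0 + 1, src_w - 1)
            fx = sx - x0
            # Blend horizontally on the two neighboring rows, then vertically.
            top = img[y0][x0] * (1 - fx) + img[y0][x1] * fx
            bottom = img[y1][x0] * (1 - fx) + img[y1][x1] * fx
            row.append(top * (1 - fy) + bottom * fy)
        out.append(row)
    return out


# A hard edge: dark on the left, bright on the right.
edge = [[0, 255], [0, 255]]
nearest = upscale_nearest(edge, 2)
bilinear = upscale_bilinear(edge, 2)

print(nearest[0])   # [0, 0, 255, 255]
print(bilinear[0])  # [0.0, 63.75, 191.25, 255.0]
```

Nearest neighbor keeps the edge hard but blocky, while bilinear smears it into in-between grays. That softening is the lost sharpness an ML upscaler is trained to avoid.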

Machine learning will not provide results as good as using a higher-resolution image, but when you do not have the option of a higher-resolution source, machine learning will provide much better results than traditional upscaling.

Pixelmator Pro’s ability to treat images with machine learning isn’t limited to increasing resolution. It can also be used to remove noise from low light, or compression artifacts, the result of heavily compressing an image with a lossy format like JPEG. Lossy image formats sacrifice image quality to save space. The more an image is compressed to save disk space/bandwidth, the more apparent the compression becomes. The effect isn’t nearly as pronounced as super resolution. The smoother gradients with ML Denoise in the example below are subtle but noticeable, especially in the sky.

Again, ML isn’t a replacement for a higher-quality image, but it can be used to help restore some of the original quality. Machine learning isn’t without its faults. ML Denoise can reduce the sharpness of grainy details. In cases like this, masking areas of the image is still useful.
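Masking boils down to a per-pixel blend between the original and the denoised image. The following is a rough Python sketch of that idea, not how Pixelmator Pro implements it. The `blend_with_mask` helper and the sample pixel values are hypothetical, and the `denoised` row is just a hand-written stand-in for a denoiser’s output.

```python
def blend_with_mask(original, denoised, mask):
    """Per pixel: a mask value of 1.0 takes the denoised pixel, 0.0 keeps the original."""
    return [
        [o * (1 - m) + d * m for o, d, m in zip(o_row, d_row, m_row)]
        for o_row, d_row, m_row in zip(original, denoised, mask)
    ]


# One row of grayscale pixels: noisy sky on the left, grainy detail on the right.
original = [[100, 140, 90, 130]]
denoised = [[115, 115, 110, 110]]  # stand-in for smoothed output (assumed values)
mask = [[1.0, 1.0, 0.0, 0.0]]      # denoise the left half only

result = blend_with_mask(original, denoised, mask)
print(result)  # [[115.0, 115.0, 90.0, 130.0]]
```

The left half comes out smoothed and the right half keeps its original grain. A soft-edged mask (values between 0 and 1) would fade between the two.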

ML can also be used to make decisions on color correction. While I wouldn’t say this is as impressive as ML Super Resolution and ML Denoise, it does provide auto-leveling that generally makes better decisions than the auto-leveling in Adobe Lightroom. It works great for quick-and-dirty color correction.

With minimal effort, an old image asset can be transformed from a dated low-resolution image into one that’s fit to be a modern web asset.

Pixelmator Pro isn’t the only software that offers ML upscaling. There are software packages like Gigapixel AI that provide more advanced image upscaling, as well as cloud-based image scaling programs meant for batch asset upscaling. The results vary based on the product/service and often on how much time you’re willing to invest.

We’re right in the midst of the rise of machine learning image processing, as both AMD and Nvidia offer competing real-time machine learning upscaling for PC gaming on Windows. It’s much easier for a GPU to render a game at a lower resolution, like 1440p, and upscale it to 2160p (4K) than it is to render the game natively at 4K. Apple, since the iPhone 11, has been packing image processing dubbed “Deep Fusion” into its camera to sweeten the photos the user takes: picking the optimal frame (every press of the shutter takes multiple photos, even when Live Photo is disabled) and performing color corrections, fixing lens distortion and correcting camera noise.
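A quick back-of-the-envelope calculation shows why rendering at 1440p and upscaling is attractive. This sketch assumes the common 16:9 frame sizes of 2560 x 1440 and 3840 x 2160, and it only counts pixels. Actual GPU cost depends on far more than pixel count.

```python
def pixel_count(width, height):
    """Number of pixels the GPU has to shade for one frame."""
    return width * height


qhd = pixel_count(2560, 1440)  # 1440p, assuming a 16:9 frame
uhd = pixel_count(3840, 2160)  # 2160p (4K), assuming a 16:9 frame
ratio = uhd / qhd

print(f"1440p: {qhd:,} pixels")   # 1440p: 3,686,400 pixels
print(f"2160p: {uhd:,} pixels")   # 2160p: 8,294,400 pixels
print(f"4K is {ratio}x the work")  # 4K is 2.25x the work
```

Native 4K means shading 2.25 times as many pixels per frame, which is the gap that real-time upscaling tries to cover.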

There may be a time in the near future when ML upscaling is performed as part of the operating system. The OS or web browser could upscale low-resolution photos or video and perform lightning-fast corrections to make images and video appear sharper and more detailed. Due to the computing resources something like this would take, it is probably far off, but perhaps not as far off as one might think.

Until then, we can use products like Pixelmator Pro, Gigapixel AI and many other machine-learning-assisted tools to restore our old media assets.